Ship AI with Laravel: Search Entire PDFs with Zero Search Logic

By Harris Raftopoulos

▶️ Watch the video tutorial (12 minutes)

In Episode 5 we built semantic search from scratch with embeddings and pgvector. That approach works well for FAQ articles and gives you full control over the data. But what if your documentation is bigger than that? Hundreds of pages of policies, product manuals, full PDFs?

In this episode we take the other approach: upload your docs to the AI provider, let it handle the chunking and embedding, and use the SDK's FileSearch tool to query them. No similarity math on our side. Just upload and search.

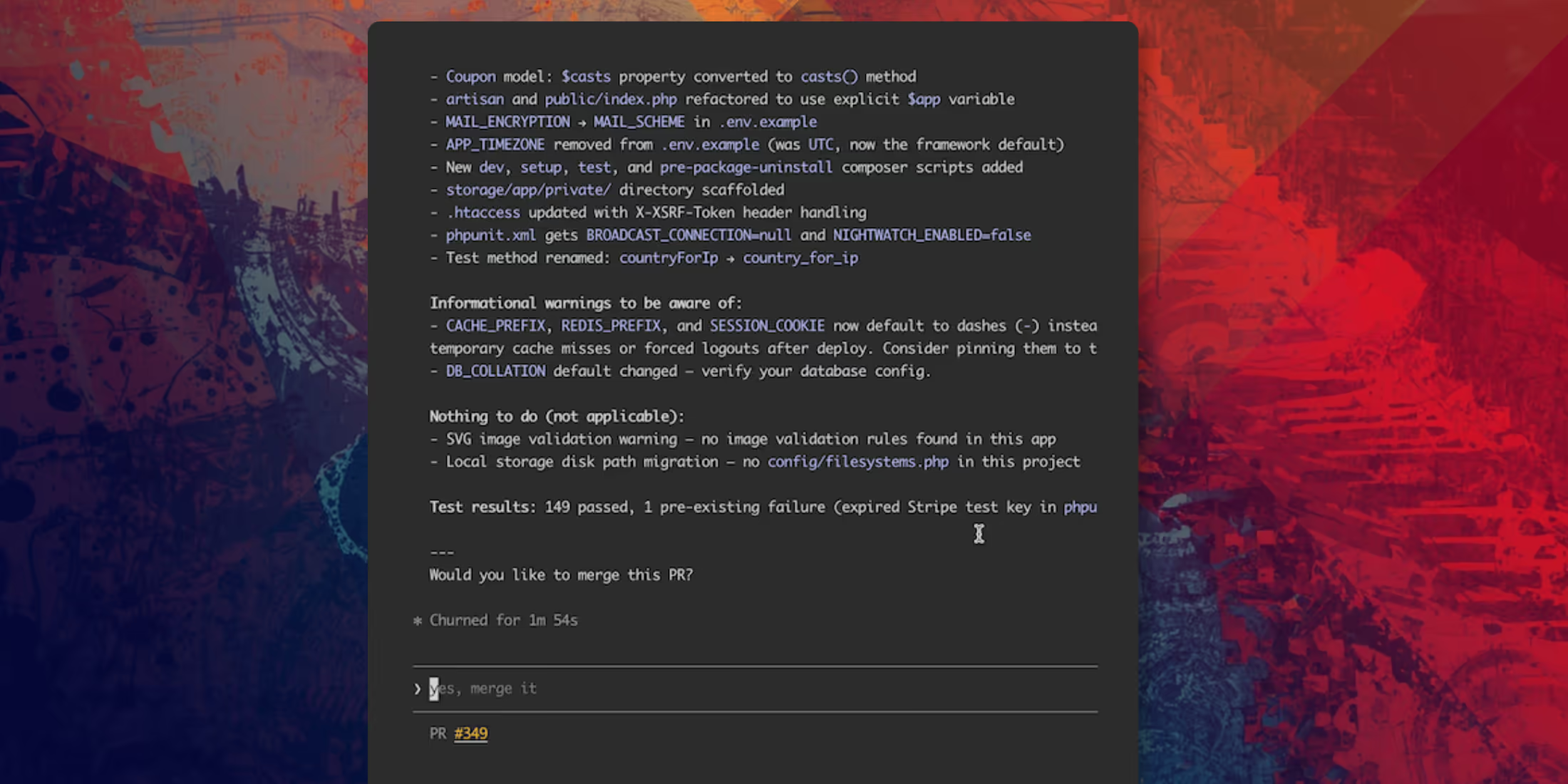

I build an Artisan command that creates a vector store called "SupportAI Knowledge Base" and uploads five markdown documents covering return policy, shipping, billing FAQ, account security, and product warranty. The store ID gets saved to .env and config so the rest of the app can reference it.
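For illustration, here is one way the saved store ID could be wired through configuration. The variable name `SUPPORT_AI_VECTOR_STORE_ID`, the file `config/support.php`, and the key `vector_store_id` are hypothetical names chosen for this sketch, not necessarily the ones used in the episode. Only the `env()` and `config()` helpers are standard Laravel.

```php
<?php

// config/support.php (hypothetical file name for this sketch)
//
// Assumes the Artisan command appended a line such as
//   SUPPORT_AI_VECTOR_STORE_ID=<store id returned by the provider>
// to .env after creating the vector store.

return [
    // Resolves to null until the store has been created and its ID saved.
    'vector_store_id' => env('SUPPORT_AI_VECTOR_STORE_ID'),
];
```

The rest of the app would then read the value with `config('support.vector_store_id')` instead of calling `env()` directly, which keeps it working once the configuration is cached.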

We add FileSearch to the support agent alongside the KnowledgeSearch tool from Episode 5 so the agent has both options. Then we update the instructions so it picks the right one: KnowledgeSearch for quick FAQ-style questions, FileSearch for detailed policy lookups.
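As a rough sketch of what such routing instructions might look like, the plain PHP function below returns an instruction string that tells the agent when to reach for each tool. The function name `supportAgentInstructions` and the exact wording are assumptions made for this example. The prompt used in the episode may differ, and how the string is handed to the agent depends on the SDK.

```php
<?php

// Hypothetical helper: returns illustrative instruction text for the
// support agent. The wording is an example, not the episode's prompt.
function supportAgentInstructions(): string
{
    return <<<'PROMPT'
    You are a customer support agent.
    - Use KnowledgeSearch for quick, FAQ-style questions.
    - Use FileSearch for detailed policy lookups.
    - If a question needs both, call both tools before answering.
    PROMPT;
}
```

Keeping the routing rules in one short list makes it easy to adjust them later if the agent picks the wrong tool.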

I test it with a question about damaged items and the agent pulls the exact 48-hour reporting requirement from the actual policy doc. Then I ask a combined question that includes both an order number and a policy question, and the agent uses OrderLookup and FileSearch in the same response.

One thing to know before you ship this to production: FileSearch is token-heavy and requires billing credits with the provider, since the provider is hosting and querying the vectors for you.

Next episode we're building the real-time streaming chat UI with Livewire so customers can actually talk to the agent through a proper interface.