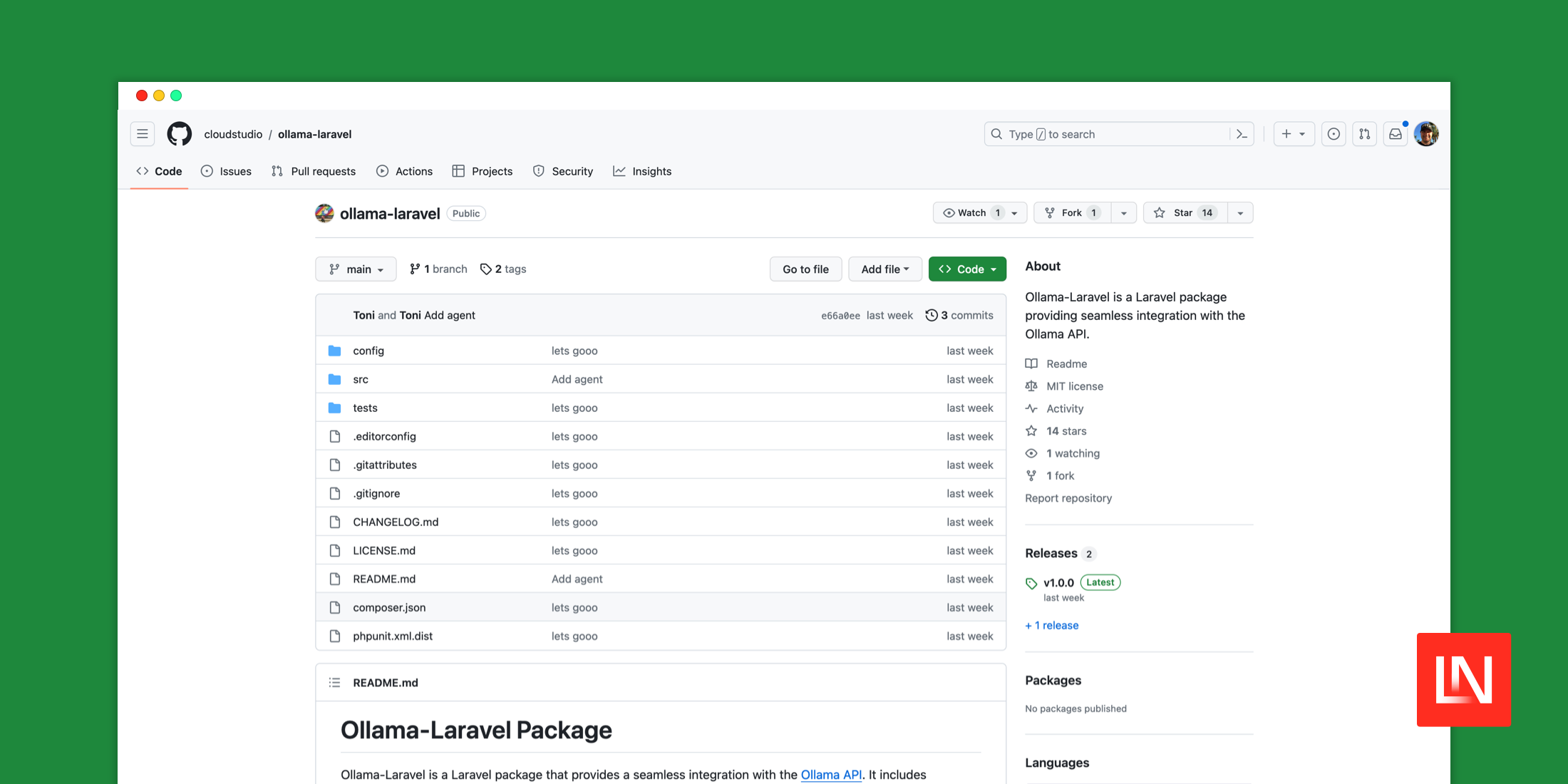

The Ollama Laravel package provides seamless integration with the Ollama API:

> Ollama-Laravel is a Laravel package that provides a seamless integration with the Ollama API. It includes functionalities for model management, prompt generation, format setting, and more. This package is perfect for developers looking to leverage the power of the Ollama API in their Laravel applications.

You can run Meta's LLaMA, a foundational 65-billion-parameter large language model, locally and interact with it from Laravel. You can also run models such as llama2, openchat, starcoder (a code-generation model trained on 80+ programming languages), sqlcoder, and models fine-tuned for domains such as medicine and psychology. This is an excellent way for developers to get hands-on experience with large language models locally!

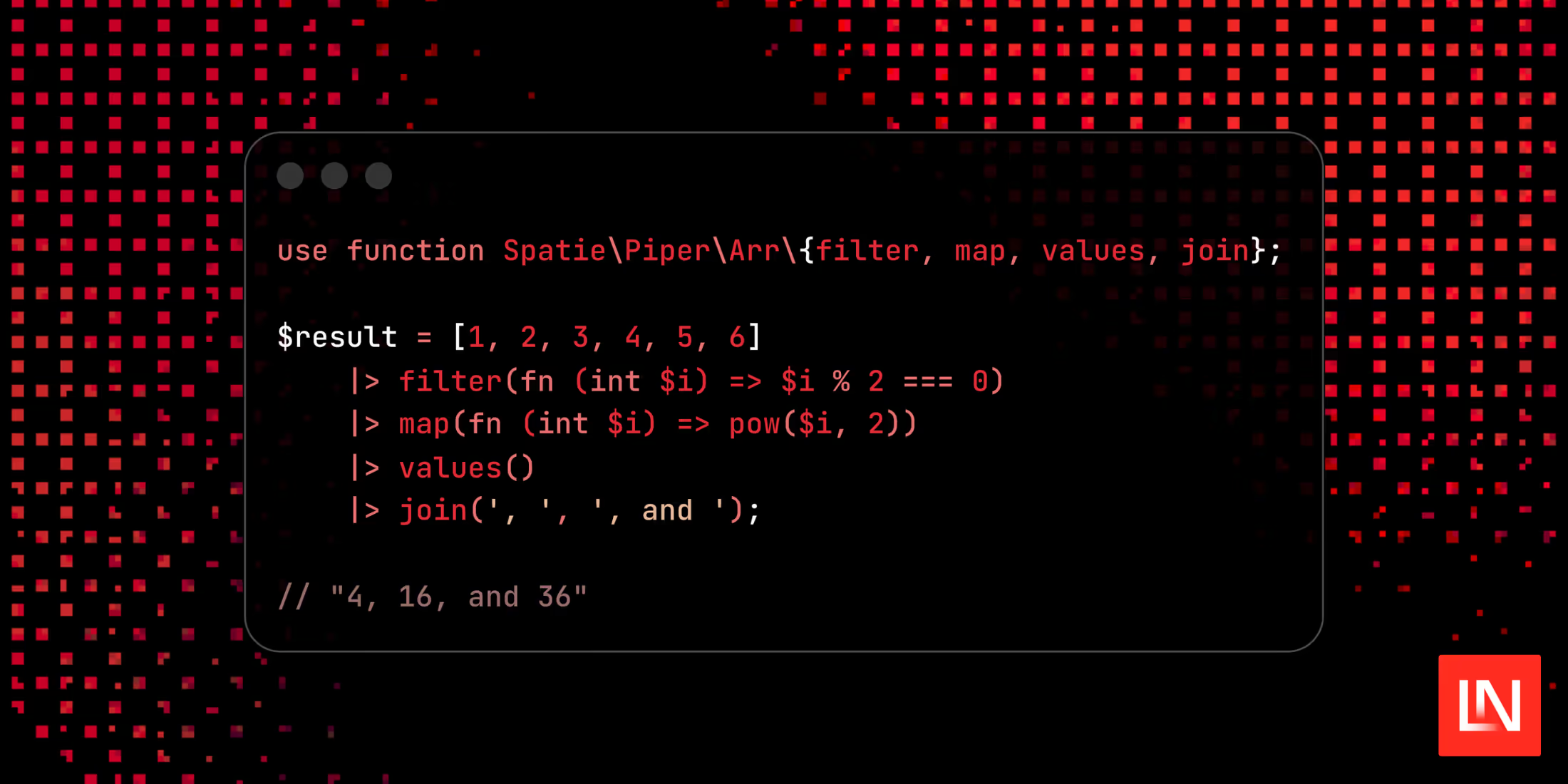

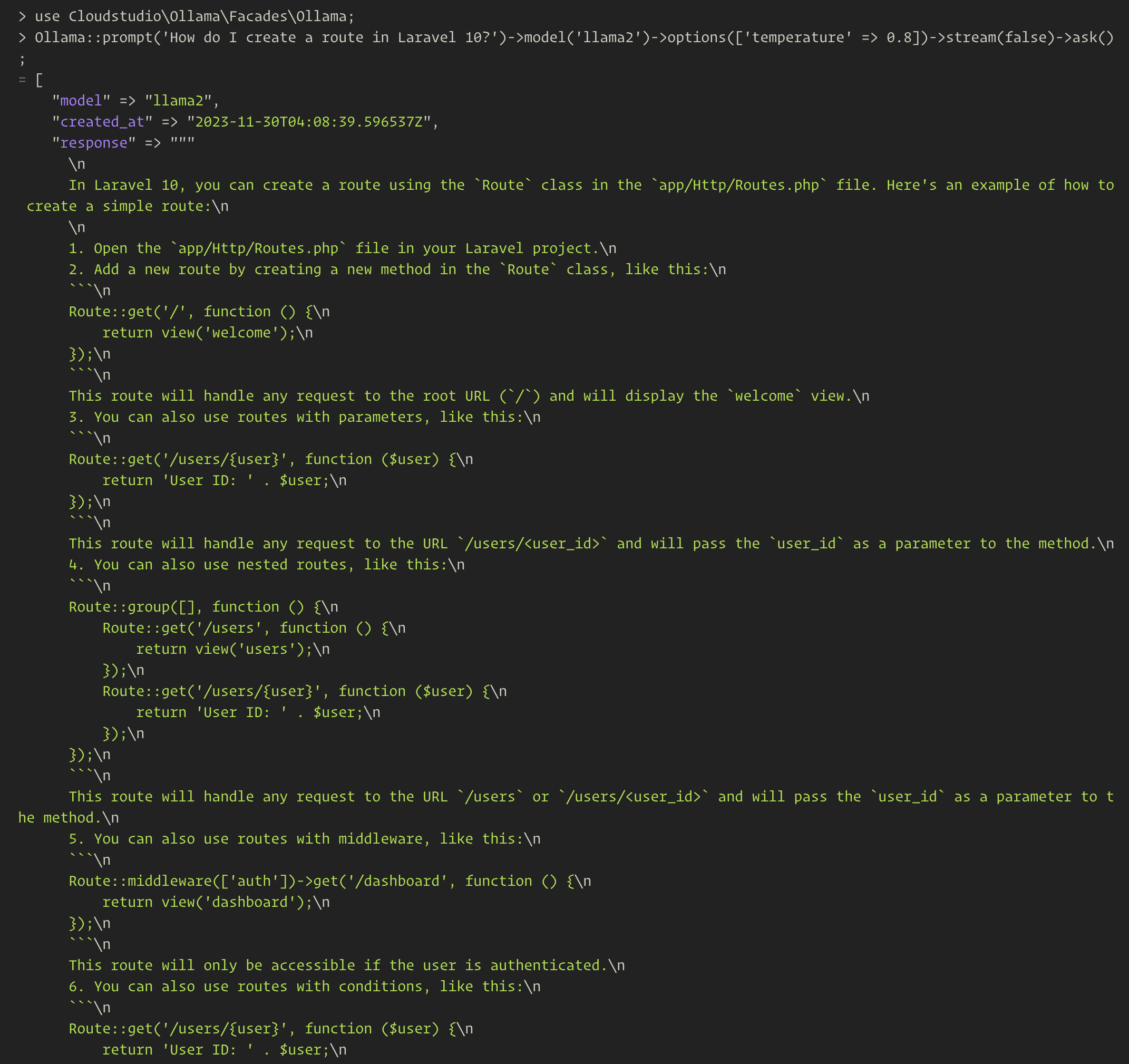

Once you install this package in Laravel, you can use the package's Ollama facade to interact with the model of your choice. Here's an example of a typical exchange with a language model:

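```php
use Cloudstudio\Ollama\Facades\Ollama;

// Send a single prompt to the locally installed llama2 model.
$response = Ollama::prompt('How do I create a route in Laravel 10?')
    ->model('llama2')                    // which local model to query
    ->options(['temperature' => 0.8])    // generation options, e.g. sampling temperature
    ->stream(false)                      // return the complete answer at once instead of streaming
    ->ask();
```

Here's an example response from a tinker shell I tried with it: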

As you can see, it didn't give me an accurate answer, but it's neat that I can experiment with language models locally on my own machine 💪
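Under the hood, Ollama serves a local REST API, and a chain like the `Ollama::prompt(...)` example maps naturally onto a request to its `POST /api/generate` endpoint. The plain-PHP sketch below (no Laravel required) builds that request body and shows how it could be sent to a local server. It assumes, rather than confirms from the package source, that Ollama-Laravel forwards `model`, `prompt`, `options`, `stream`, and `format` this way; `buildGeneratePayload()` and `generate()` are hypothetical helpers, not part of the package.

```php
<?php

// Hypothetical helper (not part of Ollama-Laravel): builds the JSON body that
// Ollama's POST /api/generate endpoint accepts, mirroring the facade chain
// prompt()->model()->options()->stream().
function buildGeneratePayload(
    string $model,
    string $prompt,
    array $options = [],
    bool $stream = false,
    ?string $format = null
): string {
    $body = [
        'model'  => $model,
        'prompt' => $prompt,
        'stream' => $stream,
    ];

    // Only send generation options (e.g. temperature) when some are set.
    if ($options !== []) {
        $body['options'] = $options;
    }

    // 'json' asks Ollama to constrain the model's output to valid JSON.
    if ($format !== null) {
        $body['format'] = $format;
    }

    return json_encode($body, JSON_UNESCAPED_SLASHES | JSON_THROW_ON_ERROR);
}

// Hypothetical helper: POSTs the payload to a local Ollama server (assumed to be
// at the default http://localhost:11434) and returns the generated text.
// Requires Ollama to be installed and running, with the model already pulled.
function generate(string $payload, string $baseUrl = 'http://localhost:11434'): string
{
    $context = stream_context_create([
        'http' => [
            'method'  => 'POST',
            'header'  => "Content-Type: application/json\r\n",
            'content' => $payload,
            'timeout' => 120,
        ],
    ]);

    $raw = file_get_contents($baseUrl . '/api/generate', false, $context);
    if ($raw === false) {
        throw new RuntimeException('Could not reach the Ollama server');
    }

    // With stream=false, Ollama returns one JSON object whose 'response'
    // field holds the full generated text.
    $data = json_decode($raw, true, 512, JSON_THROW_ON_ERROR);

    return $data['response'] ?? '';
}

$payload = buildGeneratePayload(
    'llama2',
    'How do I create a route in Laravel 10?',
    ['temperature' => 0.8]
);

// prints {"model":"llama2","prompt":"How do I create a route in Laravel 10?","stream":false,"options":{"temperature":0.8}}
echo $payload, PHP_EOL;
```

Running the script only prints the request body; calling `generate($payload)` additionally needs Ollama running locally with `llama2` pulled. Keeping payload construction separate from the HTTP call makes the request shape easy to inspect and test without a server.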

Currently, Ollama is supported on macOS and Linux, with Windows coming soon. You will need to download and install Ollama to use this package. Depending on the model you want to run, the download may take a while. You also have the option to create an account and share your own models.
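Because pulling a model can take a while, it can help to check which models the local Ollama server already has before calling the facade. The plain-PHP sketch below assumes Ollama's `GET /api/tags` endpoint on the default port 11434, which lists locally available models under a `models` key with names such as `llama2:latest`; `fetchLocalModels()` and `hasModel()` are hypothetical helpers, not part of Ollama-Laravel.

```php
<?php

// Hypothetical helper: asks a local Ollama server which models have been pulled.
// Assumes the default address http://localhost:11434 and the GET /api/tags endpoint.
function fetchLocalModels(string $baseUrl = 'http://localhost:11434'): array
{
    $raw = file_get_contents($baseUrl . '/api/tags');
    if ($raw === false) {
        throw new RuntimeException('Ollama does not appear to be running');
    }

    return json_decode($raw, true, 512, JSON_THROW_ON_ERROR);
}

// Hypothetical helper: returns true if $model (e.g. "llama2" or "llama2:13b")
// appears in the decoded /api/tags payload. A name without a tag is treated
// as "<name>:latest".
function hasModel(array $tags, string $model): bool
{
    $wanted = strpos($model, ':') !== false ? $model : $model . ':latest';

    foreach ($tags['models'] ?? [] as $entry) {
        if (($entry['name'] ?? null) === $wanted) {
            return true;
        }
    }

    return false;
}

// Usage (requires a running Ollama server):
// if (! hasModel(fetchLocalModels(), 'llama2')) {
//     // run `ollama pull llama2` before calling the facade
// }
```

Splitting the HTTP call from the lookup keeps `hasModel()` a pure function, so the tag-matching rule can be checked against a sample payload without Ollama installed.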

You can learn more about using Ollama Laravel, get full installation instructions, and view the source code on GitHub.