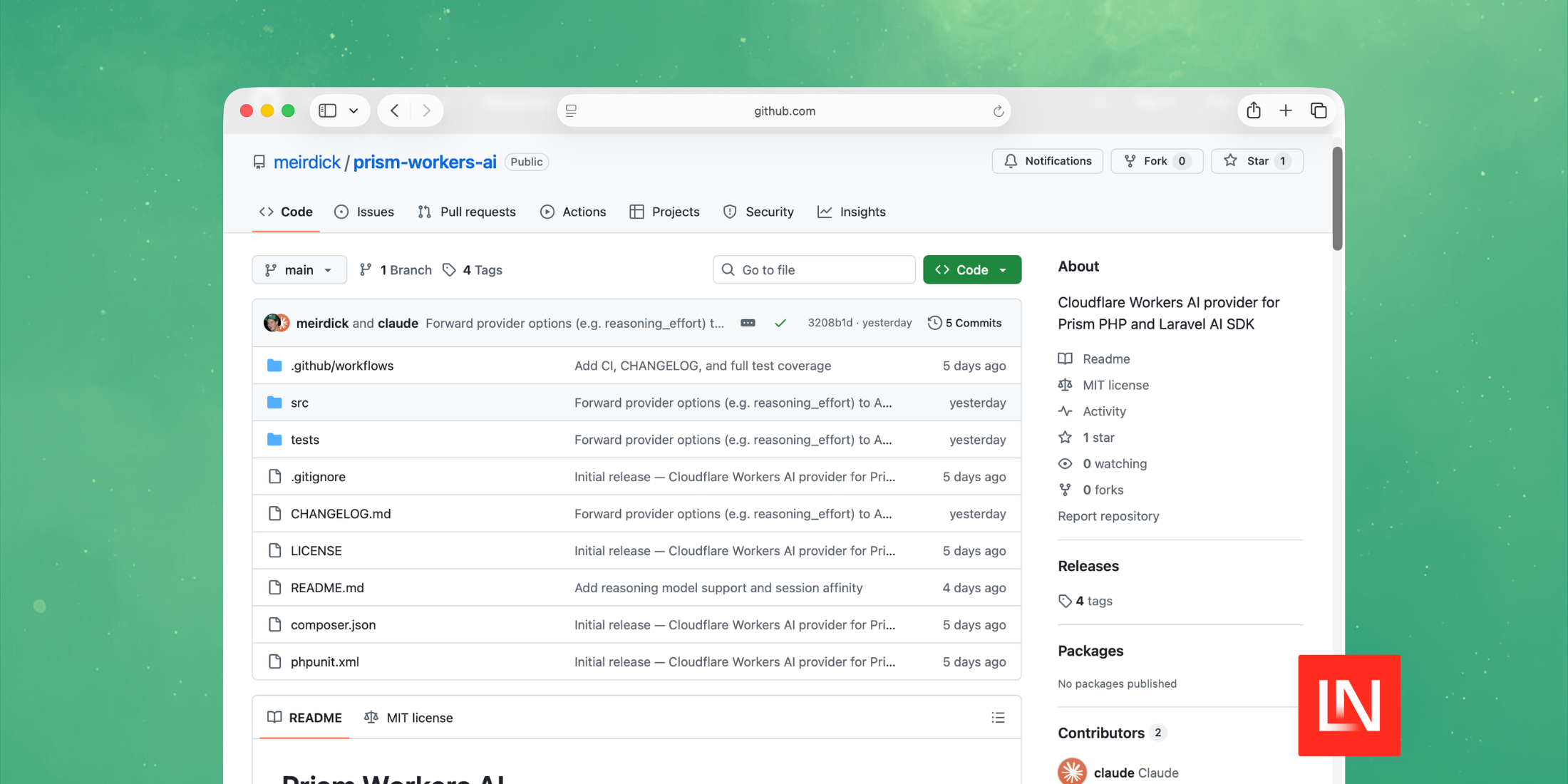

Prism Workers AI is a Cloudflare Workers AI provider for Prism PHP and the Laravel AI SDK. It routes requests through Cloudflare's AI Gateway /compat endpoint.

- Generate natural language text, structured outputs, and stream responses.

- Create vector representations using Cloudflare embedding models.

- Automate tool execution with support for complex, multi-step workflows.

- Extract and utilize reasoning content from supported reasoning models.

- Maintain conversation context using session affinity for prompt prefix caching.

- Work with both Prism PHP and the Laravel AI SDK's agent() helper.
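For context on the /compat endpoint mentioned in the introduction: it is AI Gateway's OpenAI-compatible API, which is why the model names in this article carry a `workers-ai/` provider prefix. The sketch below builds the kind of request the package would send. The URL layout is an assumption based on Cloudflare's public documentation, the `compatChatUrl()` and `compatChatBody()` helpers are hypothetical names used only for illustration, and the IDs are placeholders.

```php
<?php

declare(strict_types=1);

/**
 * Build the chat completions URL for an AI Gateway's OpenAI-compatible endpoint.
 * Assumption: the URL layout follows Cloudflare's public AI Gateway docs.
 */
function compatChatUrl(string $accountId, string $gatewayId): string
{
    return sprintf(
        'https://gateway.ai.cloudflare.com/v1/%s/%s/compat/chat/completions',
        rawurlencode($accountId),
        rawurlencode($gatewayId),
    );
}

/**
 * Build an OpenAI-style request body. The provider prefix on the model name
 * tells the gateway which backend should serve the request.
 */
function compatChatBody(string $model, string $prompt): array
{
    return [
        'model' => $model,
        'messages' => [
            ['role' => 'user', 'content' => $prompt],
        ],
    ];
}

// Placeholder IDs, not real credentials.
$url = compatChatUrl('my-account-id', 'my-gateway');
$body = compatChatBody('workers-ai/@cf/meta/llama-3.3-70b-instruct-fp8-fast', 'Hello!');

echo $url, PHP_EOL;
echo json_encode($body, JSON_UNESCAPED_SLASHES | JSON_PRETTY_PRINT), PHP_EOL;
```

In practice the package handles this for you; the sketch is only meant to show what sits behind the provider and model strings passed to `using()`.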

Text Generation and Streaming

Generate text using any Workers AI model through Prism's fluent API:

```php
use Prism\Prism\Facades\Prism;

$response = Prism::text()
    ->using('workers-ai', 'workers-ai/@cf/meta/llama-3.3-70b-instruct-fp8-fast')
    ->withPrompt('Hello!')
    ->asText();
```

Streaming works the same way:

```php
$stream = Prism::text()
    ->using('workers-ai', 'workers-ai/@cf/meta/llama-3.3-70b-instruct-fp8-fast')
    ->withPrompt('Tell me a story')
    ->asStream();
```

Embeddings

Unlike Prism's built-in xAI driver, this package supports embeddings:

```php
$response = Prism::embeddings()
    ->using('workers-ai', 'workers-ai/@cf/baai/bge-large-en-v1.5')
    ->fromInput('Hello world')
    ->generate();
```

Tool Calling
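The tool-calling example in this section passes a `$weatherTool` without defining it. Here is a minimal sketch of such a tool, assuming Prism's `Tool` facade and its `as()`, `for()`, `withStringParameter()`, and `using()` builders. The `describeWeather()` helper and its canned reply are hypothetical stand-ins for a real weather lookup.

```php
<?php

use Prism\Prism\Facades\Tool;

// Hypothetical handler: a real tool would query a weather service here.
function describeWeather(string $city): string
{
    return "The weather in {$city} is sunny and 22°C.";
}

// The parameter name 'city' matches the handler's argument name, so the
// arguments the model supplies can be mapped onto the function call.
$weatherTool = Tool::as('weather')
    ->for('Get current weather conditions for a city')
    ->withStringParameter('city', 'The city to get weather for')
    ->using(describeWeather(...));
```

Keeping the handler as a plain function makes it easy to test on its own, without going through a model.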

The package supports tool calling with multi-step workflows. Workers AI requires content to always be present on assistant messages, even when empty during tool call responses — this package handles that automatically:

```php
$response = Prism::text()
    ->using('workers-ai', 'workers-ai/@cf/meta/llama-3.3-70b-instruct-fp8-fast')
    ->withTools([$weatherTool])
    ->withMaxSteps(3)
    ->withPrompt('What is the weather?')
    ->asText();
```

Reasoning Models with Thinking Content

The package extracts reasoning content from reasoning models such as Kimi K2.5. The reasoning chain is available alongside the final response:

```php
$response = Prism::text()
    ->using('workers-ai', 'workers-ai/@cf/moonshotai/kimi-k2.5')
    ->withMaxTokens(2000)
    ->withPrompt('What is 15 * 37?')
    ->asText();

$response->text;
// "555"

$response->steps[0]->additionalContent['thinking'];
// "The user is asking for the product of 15 and 37..."
```

When streaming, you receive thinking events before the text output:

```php
$stream = Prism::text()
    ->using('workers-ai', 'workers-ai/@cf/moonshotai/kimi-k2.5')
    ->withMaxTokens(2000)
    ->withPrompt('Explain briefly why the sky is blue.')
    ->asStream();

foreach ($stream as $event) {
    // ThinkingStartEvent, ThinkingEvent (deltas), ThinkingCompleteEvent
    // then TextStartEvent, TextDeltaEvent, TextCompleteEvent
}
```

Set withMaxTokens(2000) or higher when using reasoning models, since reasoning tokens count against the limit.
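To keep the reasoning trace separate from the answer while streaming, the event loop above can accumulate each kind of delta into its own buffer. The following is a sketch rather than the package's own code: the two event classes are stand-ins defined only so the example runs by itself, the `delta` property name is an assumption to check against your Prism version, and `collectStream()` is a hypothetical helper.

```php
<?php

declare(strict_types=1);

// Stand-ins for illustration only. In a real app the events come from the
// Prism stream; the `delta` property name is an assumption.
final class ThinkingEvent
{
    public function __construct(public string $delta) {}
}

final class TextDeltaEvent
{
    public function __construct(public string $delta) {}
}

/**
 * Collect reasoning and answer text separately from a stream of events.
 *
 * @param iterable<object> $stream
 * @return array{thinking: string, text: string}
 */
function collectStream(iterable $stream): array
{
    $thinking = '';
    $text = '';

    foreach ($stream as $event) {
        // Match on the short class name so the sketch does not depend on a namespace.
        $name = substr(strrchr('\\' . $event::class, '\\'), 1);

        if ($name === 'ThinkingEvent') {
            $thinking .= $event->delta;
        } elseif ($name === 'TextDeltaEvent') {
            $text .= $event->delta;
        }
        // Any other event type (start, complete) is skipped.
    }

    return ['thinking' => $thinking, 'text' => $text];
}

// Simulated stream: thinking deltas arrive before the text output.
$result = collectStream([
    new ThinkingEvent('15 * 37 = 15 * 30 + 15 * 7. '),
    new ThinkingEvent('450 + 105 = 555.'),
    new TextDeltaEvent('555'),
]);
```

With a real stream you would pass `$stream` to the helper instead of the simulated array, or echo each text delta as it arrives rather than buffering it.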

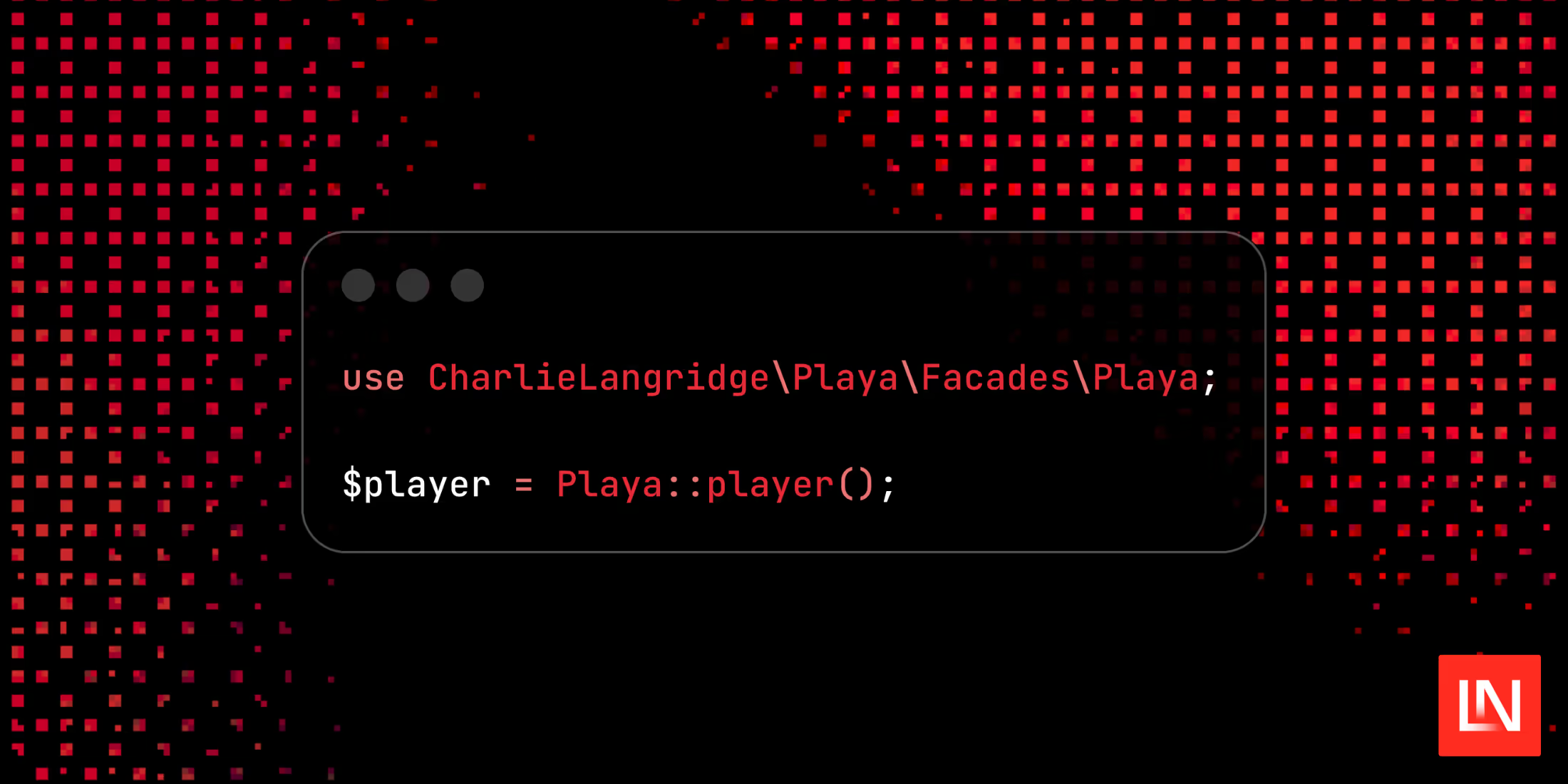

Laravel AI SDK Integration

If you're using laravel/ai, the package also registers a workers-ai driver:

```php
use function Laravel\Ai\agent;

$response = agent(instructions: 'You are a helpful assistant.')
    ->prompt('Hello!', provider: 'workers-ai');
```

You can learn more about this package on GitHub at meirdick/prism-workers-ai.

Note: the package requires PHP 8.2+ and Prism PHP ^0.99, with optional support for Laravel AI SDK ^0.3.